Information Retrieval Part 4 (sigh): Grounding & RAG

In an age of slop, grounding is our lord and saviour. It can significantly reduce hallucinations and is far cheaper than retraining models. But do you need to care?

If any of you have been exposed in any way to the manosphere, you’ll know that grounding is not just a way of anchoring LLM outputs to ‘trusted’ external sources. It is the ‘principle’ of putting your feet on the ground in the morning and feeling the grass beneath your feet.

For the convenient price of £1500 an hour, people like Peter Attia and Andrew Huberman can teach how to do it. As long as they aren’t busy on a notorious paedophile’s Caribbean island I mean.

Allegedly.

But we’re boring. Grounding to us isn’t an ancestral tenet. We don’t eat pig testicles for breakfast. It’s all AI baby. When we’re talking about grounding, we mean fact-checking the hallucinations of planet destroying robots and tech bros.

If you want a non-stupid opening line, when models accept they don’t know something, they ground results in an attempt to fact check themselves.

Happy now?

Ironically, fact checking would be incredibly valuable for the manosphere. But maybe they need someone else to do it for them.

TL;DR

LLMs don’t search or store sources or individual URLs, they generate answers from pre-supplied content.

RAG anchors LLMs in specific knowledge backed by factual, authoritative and current data. It reduces hallucinations.

Retraining a foundation model or fine-tuning it is computationally expensive and resource-intensive. Grounding results is far cheaper.

With RAG, enterprises can use internal, authoritative data sources and gain similar model performance increases without retraining. It solves the lack of up-to-date knowledge LLMs have (or rather don’t).

What is RAG?

RAG - Retrieval Augmented Generation - is a form of grounding and a foundational step in answer engine accuracy. LLMs are trained on vast corpuses of data and every dataset has limitations. Particularly when it comes to things like newsy queries or changing intent.

When a model is asked a question it doesn’t have the confidence to answer accurately, it reaches out to specific trusted sources to ground the response, rather than relying solely on its training data.

The retrieval system identifies relevant, similar pages or passages from this external information and includes the chunks as part of the answer.

This provides a really valuable look at why being in the training data is so important. You are more likely to be selected as a trusted source for RAG if you appear in the training data for relevant topics.

It’s one of the reasons why disambiguation and accuracy are more important than ever in today’s iteration of the internet.

Why do we need it?

Because LLMs are notoriously hallucinatory. They have been trained to provide you with an answer. Even if the answer is wrong.

Grounding results provides some relief from the flow of batshit information.

All models have a cutoff limit in their training data. They can be a year old or more. So anything that has happened in the last year would be un-answerable without the real time grounding of facts and information.

Once a model has ingested a sizeable amount of training data, it is far cheaper to rely on a RAG pipeline to answer questions about new information than to retrain the model.

Dawn Anderson has a great presentation called You Can’t Generate What You Can’t Retrieve. Well worth a read, even if you can’t be in the room.

Do grounding and RAG differ?

Yes. RAG is a form of grounding.

Grounding is a broad brush term for any type of anchoring of AI responses in trusted, factual data. RAG achieves grounding by retrieving relevant documents or passages from external sources.

In almost every case you or I will work with, that source is live web search.

Think of it like this:

Grounding is the final output - ‘please stop making things up.’

RAG is the mechanism. When it doesn’t have the appropriate confidence to answer a query, ChatGPT’s internal monologue says ‘don’t just fucking lie about it, verify the information.’

So grounding can be achieved through fine tuning, prompt engineering or RAG.

RAG either supports the model’s claims when the confidence threshold isn’t met, or finds the source for a story that doesn’t appear in its training data.

Imagine a fact you hear down the pub. Someone tells you that the scar they have on their chest was from a shark attack. A hell of a story. A quick bit of verifying would tell you that they choked on a peanut in said pub and had to have a nine hour operation to get a part of their lung removed.

True story - and one I believed until I was at university. It was my dad.

There is a lot of conflicting information out there as to what web search these models use. However, we have very solid information that ChatGPT is (still) scraping Google’s search results to form its responses when using web search.

Why can no-one solve AI’s hallucinatory problem?

A lot of hallucinations make sense when you frame them as a model filling the gaps. The model fails seamlessly.

It is a plausible falsehood.

It’s like Elizabeth Holmes of Theranos infamy. You know it’s wrong, but you don’t want to believe it. The you here being some immoral old media mogul or some investment firm who cheaped out on the due diligence.

“Even as language models become more capable, one challenge remains stubbornly hard to fully solve: hallucinations. By this we mean instances where a model confidently generates an answer that isn’t true.”

That is a direct quote from OpenAI. The hallucinatory horse’s mouth.

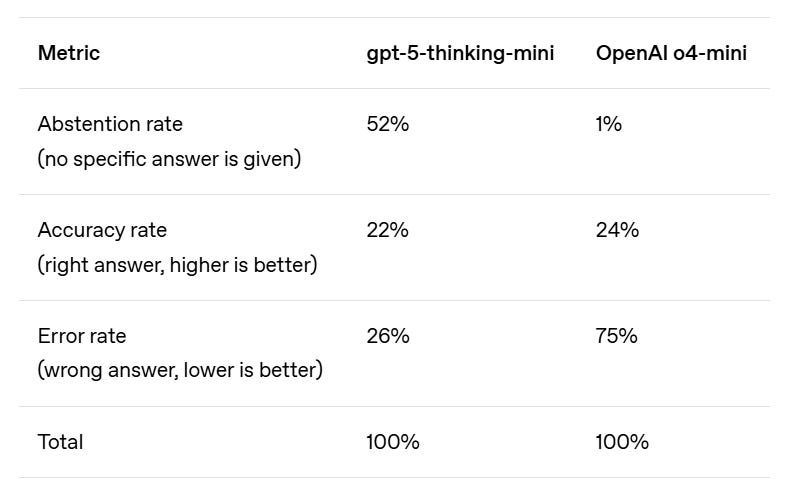

Models hallucinate for a few reasons. As argued in OpenAI’s most recent research paper, they hallucinate because training processes and evaluation reward an answer. Right or not.

If you think of it in a Pavlovian conditioning sense, the model gets a treat when it answers. But that doesn’t really answer why models get things wrong. Just that the models have been trained to answer your ramblings confidently and without recourse.

This is largely due to how the model has been trained.

Ingest enough structured or semi-structured data (with no right or wrong labelling) and they become incredibly proficient at predicting the next word. At sounding like a sentient being.

Not one you’d hang out with at a party. But a sentient sounding one.

If a fact is mentioned dozens or hundreds of times in the training data, models are far less likely to get it wrong. Models value repetition. Facts that appear only once are a different story. How many of them there are acts as a proxy for how many ‘novel’ facts you might encounter in further sampling, and so for how often the model will have to guess.

The share of facts that appear just once is known as the singleton rate. In a never before made comparison, a high singleton rate is a recipe for disaster for LLM training data, but brilliant for Essex hen parties.
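If it helps to see the singleton rate as arithmetic, here is a minimal sketch. It assumes a simplified reading of the term: the share of training examples whose fact shows up exactly once. The function name and the toy ‘facts’ are made up for illustration, and the paper’s formal definition may differ in the details.

```python
from collections import Counter


def singleton_rate(fact_mentions):
    """Share of mentions whose fact appears exactly once (simplified assumption)."""
    if not fact_mentions:
        return 0.0
    counts = Counter(fact_mentions)
    singletons = sum(1 for count in counts.values() if count == 1)
    return singletons / len(fact_mentions)


# Made-up facts pulled from a pretend training corpus.
mentions = [
    "paris_is_capital_of_france",
    "paris_is_capital_of_france",
    "paris_is_capital_of_france",
    "water_boils_at_100c",
    "water_boils_at_100c",
    "water_boils_at_100c",
    "dads_scar_was_a_peanut",   # mentioned once
    "local_cafe_opened_2019",   # mentioned once
]

print(singleton_rate(mentions))  # 2 singletons out of 8 mentions -> 0.25
```

The well-repeated facts are the ones a model is likely to get right. The two one-off facts are where it is most likely to make something up.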

According to this paper on why language models hallucinate:

“Even if the training data were error-free, the objectives optimised during language model training would lead to errors being generated.”

Even when the training data is 100% error-free, the model will generate errors. They are built by people. People are flawed and we fucking love confidence.

Several post-training techniques - like reinforcement learning from human feedback or, in this case, forms of grounding - do reduce hallucinations.

How does RAG work?

Technically, you could say that the RAG process is initiated long before a query is received. But I’m being a bit arsey there. And I’m not an expert.

Standard LLMs source information from their training data. This data is ingested during training and ends up as parametric memory (more on that later). So, whoever is training the model is making explicit decisions about the type of content that will likely require a form of grounding.

RAG adds an information retrieval component to the AI layer. The system:

➡️ Retrieves data

➡️ Augments the prompt

➡️ Generates an improved response.

A more detailed explanation (should you want it) would look something like:

The user inputs a query and it’s converted into a vector

The LLM uses its parametric memory to attempt to predict the next likely sequence of tokens

The vector distance between the query and a set of documents is calculated using Cosine Similarity or Euclidean Distance

This determines whether the model’s stored (or parametric) memory is capable of fulfilling the user’s query without calling an external database

If a certain confidence threshold isn’t met, RAG (or a form of grounding) is called

A retrieval query is sent to the external database

The RAG architecture augments the existing answer. It clarifies factual accuracy or adds information to the incumbent response

A final, improved output is generated
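The steps above can be sketched in a few lines of Python. Treat this as a toy under stated assumptions and not how any real answer engine is built: `embed` is a bag-of-words stand-in for a learned embedding, `model_confidence` is passed in by hand because the source doesn’t say how a model estimates it, the 0.7 threshold is arbitrary, and the final generation step is left out because it needs an actual LLM.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'vector': word counts. Real systems use learned dense embeddings."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, top_k=2):
    """Rank documents by similarity to the query and keep the best matches."""
    query_vector = embed(query)
    scored = [(cosine_similarity(query_vector, embed(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(query, documents, model_confidence, threshold=0.7):
    """Augment the prompt with retrieved passages only when confidence is low."""
    if model_confidence >= threshold:
        return query  # parametric memory is trusted, no retrieval
    passages = retrieve(query, documents)
    context = "\n".join(f"- {passage}" for passage in passages)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"


# Hypothetical mini 'index' standing in for an external database.
docs = [
    "Google dropped the num=100 parameter in September 2025",
    "Grass feels nice under your feet in the morning",
    "RAG retrieves passages and adds them to the prompt",
]
query = "When did Google drop the num=100 parameter?"

print(build_prompt(query, docs, model_confidence=0.2))
```

With a high `model_confidence` the query passes through untouched. With a low one, the closest passages are bolted on to the prompt before anything is generated.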

If a model is using an external database like Google or Bing (which they all do), it doesn’t need to create one to be used for RAG.

This makes things a shit ton cheaper.

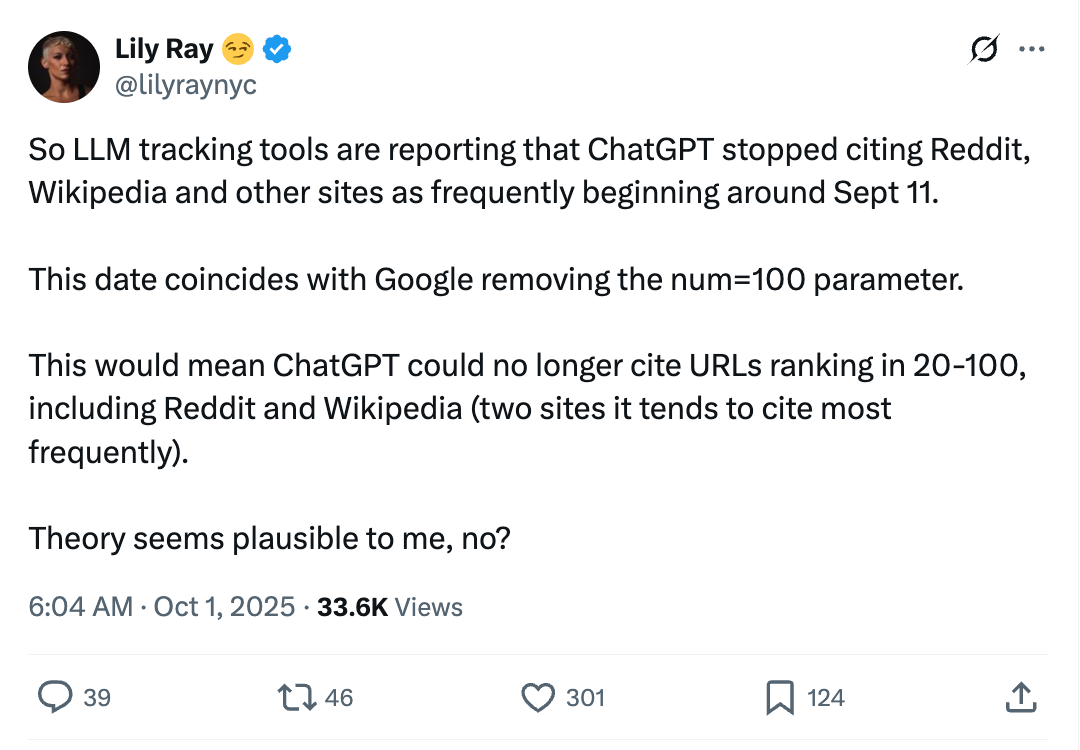

The problem the tech heads have is that they all hate each other. So when Google dropped the num=100 parameter in September 2025, ChatGPT citations fell off a cliff. They could no longer use their third party partners to scrape this information.

It’s worth noting that more modern RAG architectures apply a hybrid model of retrieval, where semantic searching is run alongside more basic keyword type matches. Like updates to BERT (DeBERTa) and RankBrain, this means the answer takes the entire document and contextual meaning into account when answering.
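One common way to merge a keyword ranking with a semantic ranking is reciprocal rank fusion. To be clear, this is my choice of technique for illustration. Nothing in the sources above says which fusion method any given answer engine uses. The page IDs and both rankings below are invented.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists: each list adds 1 / (k + rank) to a document's score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest combined score first.
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical results for the same query from two retrievers.
keyword_ranking = ["page_b", "page_a", "page_c"]   # exact-term matches
semantic_ranking = ["page_a", "page_d", "page_b"]  # embedding similarity

fused = reciprocal_rank_fusion([keyword_ranking, semantic_ranking])
print(fused)  # ['page_a', 'page_b', 'page_d', 'page_c']
```

Pages that do well in both lists float to the top, which is the SEO point in miniature: match the words and the meaning.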

Hybridisation makes for a far superior model. In this agriculture case study, a base model hit 75 percent accuracy, fine-tuning bumped it to 81 percent, and fine-tuning + RAG jumped to 86 percent.

Parametric vs non-parametric memory

A model’s parametric memory is essentially the patterns it has learned from the training data it has greedily ingested.

During the pre-training phase, the models ingest an enormous amount of data - words, numbers, multi-modal content etc. Once this data has been turned into a vector space model, the LLM is able to identify patterns in its neural network.

When you ask it a question, it calculates the probability of each possible next token and ranks the candidate sequences in order of probability. The temperature setting is what provides a level of randomness.

Non-parametric memory stores (or accesses) information in an external database. Any search index being an obvious one. Wikipedia, Reddit etc too. Any kind of ideally well structured database. This allows the model to retrieve specific information when required.

RAG methodologies are able to straddle these two seemingly competing, but highly complementary, disciplines.

Models gain an ‘understanding’ of language and nuance through parametric memory.

Responses are then enriched and/or grounded to verify and validate the output via non-parametric memory.

Higher temperatures increase randomness. Or ‘creativity.’ Lower temperatures the opposite.
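Temperature is easiest to see with numbers. The sketch below uses the standard softmax-with-temperature formula: divide the model’s raw scores by the temperature before turning them into probabilities. The three scores are invented, and real models do this over tens of thousands of tokens.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw token scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be greater than zero")
    scaled = [score / temperature for score in logits]
    highest = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - highest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Made-up scores for three candidate next tokens.
logits = [2.0, 1.0, 0.1]

cold = softmax_with_temperature(logits, temperature=0.5)
hot = softmax_with_temperature(logits, temperature=2.0)

print([round(p, 2) for p in cold])
print([round(p, 2) for p in hot])
```

At the lower temperature the top token hoovers up most of the probability. At the higher one the odds flatten out, so sampling lands on the less likely tokens more often.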

Ironically these models are incredibly uncreative. It’s a bad way of framing it, but mapping words and documents into tokens is about as statistical as you can get.

Why does it matter for SEO?

If you care about AI search and it matters for your business, you need to rank well in search engines. You want to force your way into consideration when RAG searches apply.

You should know how RAG works and how to influence it.

If your brand features poorly in the training data of the model, you cannot immediately change that. Well, for future iterations you can. But the model’s knowledge base isn’t updated on the fly.

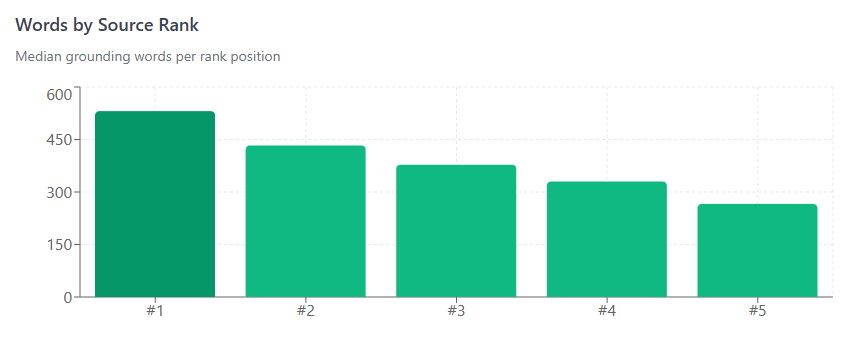

So you rely on featuring prominently in these external databases in order to be part of the answer. The better you rank, the more likely you are to feature in RAG-specific searches.

I highly recommend watching Mark Williams-Cook’s From Rags to Riches presentation. It’s excellent. Very reasonable and gives some clear guidance on how to find queries that require RAG and how you can influence them.

Basically, again, you need to do good SEO

Make sure you rank as high as possible for the relevant term in search engines

Make sure you understand how to maximise your chance of featuring in an LLM’s grounded response

Over time, do some better marketing to get yourself in the training data

All things being equal, concisely answered queries that clearly match relevant entities that add something to the corpus will work. If you really want to follow chunking best practice for AI retrieval, somewhere around 200-500 characters seems to be the sweet spot.

Smaller chunks allow for more accurate, concise retrieval. Larger chunks have more context, but can create a more ‘lossy’ environment, where the model loses its mind in the middle.
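For a feel of what chunking looks like in practice, here is a minimal sketch that packs whole sentences into chunks of up to 500 characters. The sentence-splitting rule and the `max_chars` default are my assumptions. Real retrieval systems chunk in their own (mostly undocumented) ways, often by tokens and not characters.

```python
import re


def chunk_text(text, max_chars=500):
    """Pack whole sentences into chunks no longer than max_chars where possible."""
    # Naive sentence split: break on whitespace that follows ., ! or ?
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        if not sentence:
            continue
        candidate = f"{current} {sentence}".strip()
        # A single sentence longer than max_chars is kept whole, not cut mid-thought.
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks


sample = "RAG retrieves passages. " * 40
chunks = chunk_text(sample)
print(len(chunks), [len(chunk) for chunk in chunks])
```

Keeping sentences intact is the point. A chunk that starts or ends mid-sentence is exactly the kind of ambiguity you are trying to avoid.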

Top tips (same old)

I find myself repeating these at the end of every training data article, but I do think it all remains broadly the same.

Answer the relevant query high up the page (front-loaded information)

Clearly and concisely match your entities

Provide some level of information gain

Avoid ambiguity, particularly in the middle of the document

Have a clearly defined argument and page structure, with well structured headers

Use lists and tables. Not because they’re less resource intensive token-wise, but because they tend to contain less information

My god, be interesting. Use unique data, images, video. Anything that will satisfy a user.

Match their intent.

As always, very SEO. Much AI.
